Running the MCMC

Last updated: 2022-08-13


Knit directory: workflowr/

This reproducible R Markdown analysis was created with workflowr (version 1.7.0). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.


The results in this page were generated with repository version f1a7b55. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.


These are the previous versions of the repository in which changes were made to the R Markdown (analysis/running_mcmc.Rmd) and HTML (docs/running_mcmc.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | f1a7b55 | Hillary Koch | 2022-08-13 | add DNase analysis |
| html | f1a7b55 | Hillary Koch | 2022-08-13 | add DNase analysis |
| Rmd | e4f6d19 | Hillary Koch | 2022-07-30 | update docs |
| html | e4f6d19 | Hillary Koch | 2022-07-30 | update docs |
| Rmd | 21aa678 | Hillary Koch | 2022-07-30 | clear run mcmc cache |
| Rmd | 38dabc1 | Hillary Koch | 2022-07-30 | reformat gelman rubin part |
| html | 38dabc1 | Hillary Koch | 2022-07-30 | reformat gelman rubin part |
| Rmd | fc7424f | Hillary Koch | 2022-07-30 | add gelman rubin example |
| html | fc7424f | Hillary Koch | 2022-07-30 | add gelman rubin example |
| Rmd | c1e13d0 | Hillary Koch | 2022-07-30 | working with new computer |
| html | c1e13d0 | Hillary Koch | 2022-07-30 | working with new computer |

Note: this is likely the most time-consuming step of a CLIMB analysis.

The final step of CLIMB involves doing inference on the parsimonious Gaussian mixture using MCMC. MCMC is an iterative method, so the user must specify how many iterations to run. We recommend first running a quick pilot analysis (say, for 10 iterations). The pilot's run time gives a good idea of how long a full analysis with many more iterations (say, 20,000) will take.
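As a rough sketch of how a pilot run can be turned into a run-time estimate, the helper below extrapolates linearly from the pilot's elapsed time. extrapolate_runtime() is a hypothetical helper, not part of CLIMB, and the linear scaling is an assumption: a short pilot may include one-time startup cost, so the estimate is likely to err on the high side. The pilot time of 1.5 seconds is made up for illustration.

```r
# Hypothetical helper (not part of CLIMB): linearly extrapolate wall-clock
# time from a short pilot run to a longer target run.
extrapolate_runtime <- function(pilot_seconds, pilot_steps, target_steps) {
  stopifnot(pilot_seconds >= 0, pilot_steps > 0, target_steps > 0)
  seconds <- pilot_seconds / pilot_steps * target_steps
  c(seconds = seconds, minutes = seconds / 60, hours = seconds / 3600)
}

# With CLIMB, the pilot could be timed like this (commented out because it
# needs CLIMB, Julia, and the inputs loaded later on this page):
# pilot_time <- system.time(
#   run_mcmc(sim$data, hyp = hyp, nstep = 10, retained_classes = retained_classes)
# )[["elapsed"]]
# extrapolate_runtime(pilot_time, pilot_steps = 10, target_steps = 20000)

# Standalone illustration with a made-up pilot time of 1.5 seconds
est <- extrapolate_runtime(pilot_seconds = 1.5, pilot_steps = 10, target_steps = 20000)
print(est)
```

If the pilot is dominated by startup cost, timing two pilots of different lengths and using the difference would give a better per-iteration estimate.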

You can run an MCMC simply with the function run_mcmc(). This function calls a script written in Julia and executes everything at the default settings in the CLIMB methodology. The user needs to provide 4 arguments:

- dat: the input data you’ve been using throughout the analysis
- hyp: the hyperparameter values estimated in the previous step
- nstep: the number of MCMC iterations to run
- retained_classes: the parsimonious list of candidate latent classes, after the final filtering by prior weights done in the previous step

First, we load in our data, list of candidate latent classes, and estimated hyperparameters.

library(CLIMB)

data("sim")

load("output/hyperparameters.Rdata")

retained_classes <- readr::read_tsv("output/retained_classes.txt", col_names = FALSE)

Now we are ready to launch an MCMC:

set.seed(100)

results <- run_mcmc(sim$data, hyp = hyp, nstep = 1000, retained_classes = retained_classes)

out <- extract_chains(results)

Running the MCMC...100%|████████████████████████████████| Time: 0:02:14

The object results contains 3 objects:

- chain: the estimated parameters over the course of nstep iterations
- acceptance_rate_chain: an \(M \times\) nstep matrix of the acceptance rates for each cluster covariance. The proposals for each cluster are adaptively tuned such that the acceptance rates converge to about 0.3
- tune_df_chain: the tuning degrees of freedom across the chain, adjusted to yield optimal acceptance rates

results is effectively a Julia object, so the first thing you should do with it is extract the data for R’s use, as done above with extract_chains(). out will contain all the different chains from the MCMC.

For example, you can check the MCMC trace plots. Here is the trace plot of the mean from the first cluster in the third dimension:

plot(out$mu_chains[[1]][,3], type = "l", xlab = "iteration", ylab = expression(mu[3]))

More specifically, extract_chains() returns a list with 4 elements. First, recall that M is the number of input classes and D is the dimension of the data, and let iterations be nstep+1. The output from extract_chains() contains:

- mu_chains: a list with M elements, each element a matrix of dimension iterations x D. mu_chains[[i]] is the MCMC samples for the mean vector of cluster i.
- Sigma_chains: a list with M elements, each element an array of dimension D x D x iterations. Sigma_chains[[i]] is the MCMC samples for the covariance matrix of cluster i.
- prop_chain: a matrix of dimension M x iterations, containing the MCMC samples for the mixing proportions of each class.
- z_chain: a matrix of dimension n x iterations, where n is the number of observations, containing the MCMC samples for the class labels of each observation. These labels correspond to the row indices of retained_classes (above).

These posterior samples can be used for many downstream analyses.
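As one illustration of such downstream use, the sketch below computes simple posterior summaries after discarding a burn-in. It runs on a mock list (out_mock, filled with random values) that only mimics the layout described above, so that it works without CLIMB or Julia. The sizes, the burn-in length of 20, and the choice of summaries are all assumptions made for the example.

```r
set.seed(1)
M <- 2; D <- 3; n <- 5; iterations <- 101  # i.e. nstep = 100

# Mock object with the same layout as the extract_chains() output described
# above. The values are random placeholders, not real MCMC samples.
out_mock <- list(
  mu_chains = replicate(M, matrix(rnorm(iterations * D), iterations, D),
                        simplify = FALSE),
  Sigma_chains = replicate(M, array(rep(diag(D), iterations),
                                    dim = c(D, D, iterations)),
                           simplify = FALSE),
  prop_chain = matrix(1 / M, nrow = M, ncol = iterations),
  z_chain = matrix(sample(seq_len(M), n * iterations, replace = TRUE),
                   nrow = n, ncol = iterations)
)

burnin <- 1:20                                  # assumed burn-in
keep <- setdiff(seq_len(iterations), burnin)    # iterations to summarize

# Posterior mean of each cluster's mean vector: an M x D matrix
post_mu <- t(sapply(out_mock$mu_chains,
                    function(m) colMeans(m[keep, , drop = FALSE])))

# Posterior mean of each cluster's covariance: a list of D x D matrices
post_Sigma <- lapply(out_mock$Sigma_chains,
                     function(S) apply(S[, , keep, drop = FALSE], c(1, 2), mean))

# Posterior mean mixing proportions: a vector of length M
post_prop <- rowMeans(out_mock$prop_chain[, keep, drop = FALSE])

# Most frequently sampled class label for each observation
post_z <- apply(out_mock$z_chain[, keep, drop = FALSE], 1, function(z) {
  as.integer(names(which.max(table(z))))
})
```

With real output, replacing out_mock by out should give the same shapes. The labels in post_z would then index rows of retained_classes.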

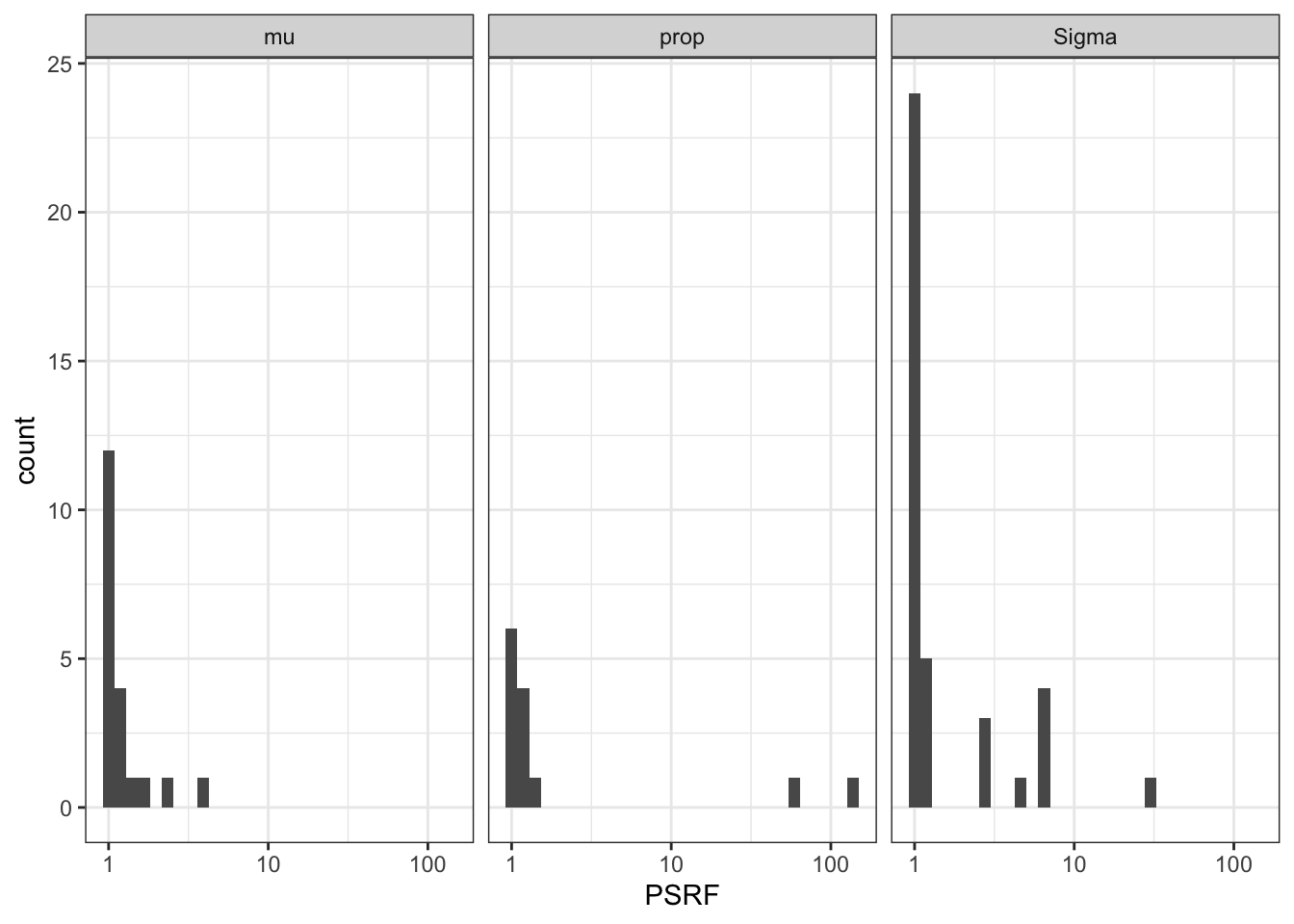

Multiple parallel chains for assessing convergence

In addition to viewing trace plots, one could run multiple parallel MCMC chains in order to assess convergence quantitatively via the Gelman-Rubin convergence diagnostic. The code below replicates the previous MCMC 3 times and calculates the potential scale reduction factors (PSRFs) for each parameter estimate. Ideally, each PSRF should be close to 1. Note that calculate_gelmanRubin() returns NA for parameters associated with a 0 class label, since these parameters do not actually get sampled during MCMC.

# Run 3 replicate MCMC chains

results3 <- purrr::map(1:3, ~ run_mcmc(sim$data, hyp = hyp, nstep = 1000, retained_classes = retained_classes))

chain_list <- purrr::map(results3, extract_chains)

burnin_list <- rep(list(1:200), length(chain_list))

# calculate potential scale reduction factors for each parameter

PSRFs <- calculate_gelmanRubin(chain_list, burnin_list)

str(PSRFs)

List of 3

$ PSRF_mu : num [1:13, 1:3] 1.13 1.01 1 NA NA ...

$ PSRF_Sigma: num [1:3, 1:3, 1:13] 1 NA 1.01 NA NA ...

$ PSRF_prop : num [1:13] 1.12 1 1 1.01 1.02 ...

Below we plot histograms of the PSRFs for each parameter. Even after running these chains for a short time, we have already achieved good convergence. Unsurprisingly, the cluster mean and proportion estimates converge faster than the covariance parameters. In practice, for larger and more complex datasets, chains will need to be run for longer than was done here.

library(ggplot2)

library(magrittr) # provides the %>% pipe used below

purrr::map(PSRFs, ~ as.vector(.x) %>%

magrittr::extract(!is.na(.))) %>%

purrr::imap_dfr( ~ data.frame(parameter = .y, PSRF = .x)) %>%

ggplot(aes(x = PSRF)) +

geom_histogram() +

scale_x_continuous(trans = "log10") +

facet_wrap(~parameter, labeller = labeller(.cols = ~ gsub(pattern = "PSRF_", replacement = "", .x))) +

theme_bw()
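For intuition about what a PSRF measures, here is a minimal sketch of the basic textbook Gelman-Rubin computation for a single scalar parameter. psrf_scalar() is a hypothetical helper written for this illustration. It is not CLIMB's implementation, and calculate_gelmanRubin() may differ in its details. The simulated chains are made up: one set samples the same distribution, the other has chains stuck at different means.

```r
# Hypothetical helper: basic Gelman-Rubin PSRF for one scalar parameter.
# draws is an iterations x chains matrix of post-burn-in samples.
psrf_scalar <- function(draws) {
  n <- nrow(draws)
  chain_means <- colMeans(draws)
  W <- mean(apply(draws, 2, var))     # average within-chain variance
  B <- n * var(chain_means)           # between-chain variance
  var_hat <- (n - 1) / n * W + B / n  # pooled variance estimate
  sqrt(var_hat / W)
}

set.seed(42)

# Three chains sampling the same distribution: PSRF should be near 1
mixed <- matrix(rnorm(800 * 3), nrow = 800, ncol = 3)

# Three chains centered at different values: PSRF should be well above 1
stuck <- mixed + matrix(rep(c(0, 3, 6), each = 800), nrow = 800)

psrf_mixed <- psrf_scalar(mixed)
psrf_stuck <- psrf_scalar(stuck)
print(c(mixed = psrf_mixed, stuck = psrf_stuck))
```

When the chains agree, the between-chain term adds almost nothing to the pooled variance and the ratio stays near 1. When they disagree, the pooled variance exceeds the within-chain variance and the PSRF grows.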

Session Information

print(sessionInfo())

R version 4.2.1 (2022-06-23)

Platform: aarch64-apple-darwin20 (64-bit)

Running under: macOS Monterey 12.5

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.2-arm64/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.2-arm64/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] ggplot2_3.3.6 CLIMB_1.0.0 magrittr_2.0.3

loaded via a namespace (and not attached):

[1] Rcpp_1.0.9 mvtnorm_1.1-3 tidyr_1.2.0

[4] assertthat_0.2.1 rprojroot_2.0.3 digest_0.6.29

[7] foreach_1.5.2 utf8_1.2.2 R6_2.5.1

[10] plyr_1.8.7 evaluate_0.15 highr_0.9

[13] pillar_1.8.0 rlang_1.0.4 rstudioapi_0.13

[16] whisker_0.4 jquerylib_0.1.4 rmarkdown_2.14

[19] labeling_0.4.2 readr_2.1.2 stringr_1.4.0

[22] munsell_0.5.0 bit_4.0.4 compiler_4.2.1

[25] httpuv_1.6.5 xfun_0.31 pkgconfig_2.0.3

[28] htmltools_0.5.3 tidyselect_1.1.2 tibble_3.1.8

[31] workflowr_1.7.0 codetools_0.2-18 JuliaCall_0.17.4

[34] fansi_1.0.3 withr_2.5.0 crayon_1.5.1

[37] dplyr_1.0.9 tzdb_0.3.0 later_1.3.0

[40] brio_1.1.3 grid_4.2.1 gtable_0.3.0

[43] jsonlite_1.8.0 lifecycle_1.0.1 DBI_1.1.3

[46] git2r_0.30.1 scales_1.2.0 cli_3.3.0

[49] stringi_1.7.8 vroom_1.5.7 cachem_1.0.6

[52] farver_2.1.1 LaplacesDemon_16.1.6 fs_1.5.2

[55] promises_1.2.0.1 doParallel_1.0.17 testthat_3.1.4

[58] bslib_0.4.0 ellipsis_0.3.2 generics_0.1.3

[61] vctrs_0.4.1 iterators_1.0.14 tools_4.2.1

[64] bit64_4.0.5 glue_1.6.2 purrr_0.3.4

[67] hms_1.1.1 abind_1.4-5 parallel_4.2.1

[70] fastmap_1.1.0 yaml_2.3.5 colorspace_2.0-3

[73] knitr_1.39 sass_0.4.2